This post explores the use of Livewire’s polling and event mechanisms to accumulate and maintain ordering of groupable data across table pages. If you want to keep your polling up to speed, deploy your Laravel application close to your users, across regions, with Fly.io—you can be up and running in a jiffy!

In this post we’ll craft ourselves a hassle-free ordering of groupable data across table pages, and land our users a lag-free pagination experience.

In order to do so, we’ll use Livewire’s polling feature to accumulate ordered data, and keep a client-side-paginated table up to date with polling results through Livewire’s event mechanism.

Does that sound exciting, horrifying, or both?

Any of the above’s a great reason to read on below!

Following Along

You can check out our full repository here, clone it, and make some pull requests while you’re at it!

Afterwards, connect to your preferred database, and execute a quick php artisan migrate:fresh --seed to get set up with data.

If you’re opting out of the repository route, you’ll need a Laravel project that has both Livewire and Tailwind configured.

Make sure you have a groupable dataset with you so you can see the magic of our approach in maintaining grouped data order across pages.

Run php artisan make:livewire article-table to create your Livewire component, and you’re free to dive in below.

Version Notice. This article focuses on Livewire v2; details for Livewire v3 will differ!

Paginating carefully arranged data

What’s a good approach in displaying a meticulously arranged list of data?

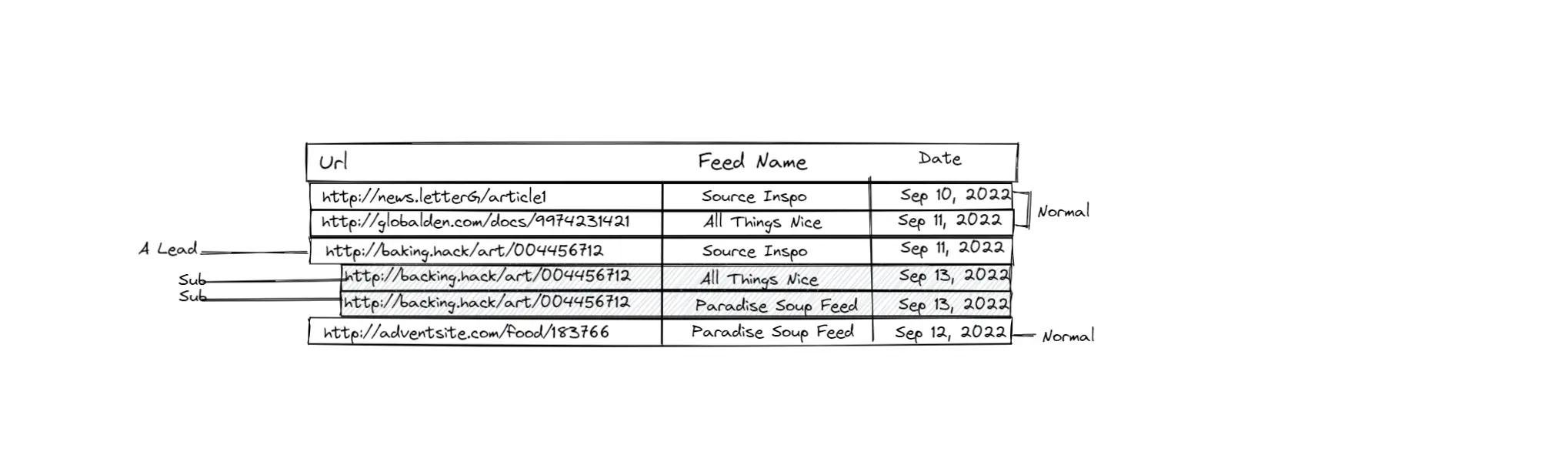

Let’s say we receive and record “bookmarks” (article links) from news feeds we follow. These bookmarks can be grouped together based on some logic, imposed manually or automatically. As an example, we may encounter exactly the same bookmark from two or more different feeds. If we’re allowing duplicate bookmarks from different feeds, then it makes sense to group these identical bookmarks together, like so:

A table containing records of bookmarks received from various feed sources. These bookmarks are groupable together, containing a LEAD row and its Sub rows.

Grouping table rows that don’t arrive sequentially is tricky. As seen in the image above, rows in a group don’t necessarily arrive on the same day, nor are they saved one after the other. Without a natural ordering in our database, we’ll have to carefully arrange our data every time we want to display it.

Furthermore, we’ll need to paginate our data to avoid bottlenecks from database retrieval, processing, and client data download when working with humongous data sets.

A group of bookmark rows spans from the current page (ninth and tenth rows) to the next page (first row).

Paginating while maintaining the order of data across different pages of a table can become complicated only too quickly. We’ll have to make sure that what we’ve shown in previous pages is still there when users return, and especially maintain the ordering of groups that span more than one page.

Client Side Pagination

—with a side dish of data accumulation.

This table initially held 51 bookmark rows. As background processes poll for more data, the number of rows it contains eventually reaches the total of 457 records.

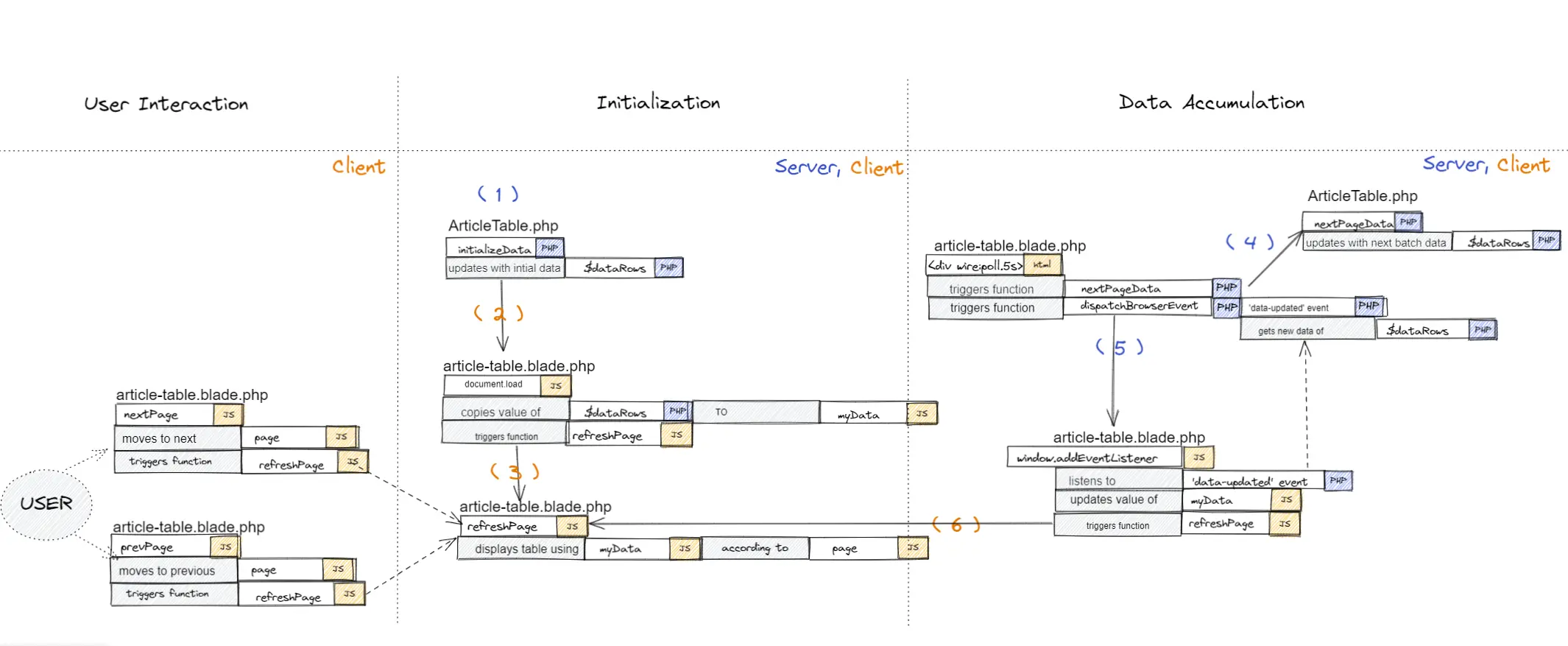

With Livewire, we can easily set up a table component with public attributes keeping track of the list of items, accumulating data over time with their ordering intact.

Accumulating data means we’ll have an evolving list of properly ordered data. Therefore, we can simply rely on displaying indices specific to a page and not worry about grouping logic during pagination.
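To make that idea concrete, here is a minimal sketch of what "displaying indices specific to a page" can look like. The helper name `rowsForPage` and the sample rows are made up for illustration; only the `id` and `lead_article_id` fields come from our table.

```javascript
// Hypothetical helper: returns the rows visible on a 1-based page.
function rowsForPage(rows, page, pageSize = 10) {
  const start = (page - 1) * pageSize;
  return rows.slice(start, start + pageSize);
}

// A tiny accumulated list: lead row 1 with Sub rows 5 and 9, then lead row 2.
const accumulated = [
  { id: 1, lead_article_id: null },
  { id: 5, lead_article_id: 1 },
  { id: 9, lead_article_id: 1 },
  { id: 2, lead_article_id: null },
];

// With a page size of 2, the group led by row 1 spans pages 1 and 2,
// yet its order is preserved because we only ever slice the list.
const pageOne = rowsForPage(accumulated, 1, 2).map((row) => row.id);
const pageTwo = rowsForPage(accumulated, 2, 2).map((row) => row.id);
```

Because the list is already ordered when it is accumulated, the page boundary can fall in the middle of a group without any extra grouping logic at display time.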

Another added benefit of our approach is client-side pagination. Livewire smoothly integrates JavaScript listeners with server-side events, allowing our rendered page’s JavaScript to easily update our client’s accumulated data.

As a result, our users’ interaction can rely solely on the accumulated data, without any calls to our server for data, all thanks to the silent accumulation provided by wire:poll.

This diagram separates the flow of initialization, data accumulation, and user interaction between the Livewire component’s controller and view.

Below, we’ll set up our Livewire controller’s public attributes and functionalities to retrieve and accumulate ordered data. Then on the last step, we’ll update our Livewire view to display and refresh our accumulated data with events and polling.

Controller

Let’s make some changes to our app/Http/Livewire/ArticleTable.php:

<?php
# app/Http/Livewire/ArticleTable.php

namespace App\Http\Livewire;

// Assumes the Article model lives in App\Models (the Laravel 8+ default)
use App\Models\Article;
use Livewire\Component;

class ArticleTable extends Component
{
    // List that accumulates data over time
    public $dataRows = [];

    // Total number of data rows,
    // also used as a reference to stop polling once reached
    public $totalRows;

    // Rows shown per page, used to size each batch of data we retrieve
    public $pagination;

    // Id of the last non-Sub row retrieved, used for querying the next batch
    public $lastNsId = 0;

    // Override this to initialize our table
    public function mount()
    {
        $this->pagination = 10;
        $this->initializeData();
    }

Polling Accumulation

For smooth sailing for our users, we’ll set up a client-side paginated table that allows table interaction to be lag-free.

We’ll initially query the necessary rows to fit the first page in the client’s table—with a pinch of allowance. This should be a fast enough query that takes less than a second to retrieve from the database, and the size of the data returned shouldn’t be big enough to cause a bottleneck in the page loading.

What makes this initial data retrieval special is the extra number of rows it provides beyond what is initially displayed. We get double the first page display, so there is an allowance of available data for next-page interaction.

    /**
     * Initially let's get double the first page data
     * to have a smooth next page feel
     * while we wait for the first poll result
     */
    public function initializeData()
    {
        $noneSubList = $this->getBaseQuery()
            ->limit($this->pagination * 2)
            ->get();

        $this->addListToData($noneSubList);
    }

    /**
     * Gets the base query
     */
    public function getBaseQuery()
    {
        // Quickly refresh the $totalRows every time we check with the db
        $this->totalRows = Article::count();

        // Return non-Sub rows only, to avoid duplicates in our $dataRows list.
        // Ordering by id keeps the $lastNsId reference reliable between batches.
        return Article::whereNull('lead_article_id')->orderBy('id');
    }

Then in order to get more data into the table, Livewire from the frontend can quietly keep adding items to the table through polling one of the controller’s public functions: nextPageData.

    /**
     * For every next page,
     * we'll get data after our last reference
     */
    public function nextPageData()
    {
        $noneSubList = $this->getBaseQuery()
            ->where('id', '>', $this->lastNsId)
            ->limit($this->pagination * 10)
            ->get();

        $this->addListToData($noneSubList);
    }
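To see how the "data after our last reference" idea plays out, here is a small JavaScript simulation of the same batching over an in-memory array. The `articles` array and the `nextBatch` helper are stand-ins invented for this sketch, and it assumes batches are read in ascending id order so that the last id of a batch can serve as the next starting point.

```javascript
// Hypothetical in-memory stand-in for the articles table.
const articles = [
  { id: 3, lead_article_id: null },
  { id: 1, lead_article_id: null },
  { id: 2, lead_article_id: 1 },
  { id: 4, lead_article_id: null },
];

// Mirrors the idea behind nextPageData(): lead rows only, with an id greater
// than the last one seen, sorted by id so the last row holds the largest id.
function nextBatch(table, lastId, limit) {
  return table
    .filter((row) => row.lead_article_id === null && row.id > lastId)
    .sort((a, b) => a.id - b.id)
    .slice(0, limit);
}

const first = nextBatch(articles, 0, 2);
const second = nextBatch(articles, first[first.length - 1].id, 2);
```

Each call picks up exactly where the previous one stopped, so no lead row is fetched twice and none is skipped, as long as the ordering assumption holds.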

Next, add in the core functionality for ordering the retrieved data: get any Sub rows for the result, then merge each row and its Sub rows, in proper order, into our $dataRows.

    /**
     * 1. Get possible Sub rows for the list of data retrieved in nextPageData or initializeData
     * 2. Merge list of data inclusive of their possible Sub rows, in proper ordering, to the accumulated $dataRows
     * 3. Update the $lastNsId reference for our nextPage functionality
     */
    public function addListToData($noneSubList)
    {
        $subList = $this->getSubRows($noneSubList);

        foreach ($noneSubList as $item) {
            // The non-Sub row always comes first in its group
            $this->dataRows[] = $item;
            $this->lastNsId = $item->id;

            // Its Sub rows follow right after it
            foreach ($subList as $subItem) {
                if ($subItem->lead_article_id == $item->id) {
                    $this->dataRows[] = $subItem;
                }
            }
        }
    }

    /**
     * Get the Sub rows for the given non-Sub list
     */
    private function getSubRows($noneSubList)
    {
        $idList = [];
        foreach ($noneSubList as $item) {
            $idList[] = $item->id;
        }

        return Article::whereIn('lead_article_id', $idList)->get();
    }
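The merge above compares every Sub row against every lead row. As a rough sketch of an alternative (written in JavaScript so it can be run on its own, and not the code used in the component), the Sub rows could be grouped by their lead id once and then looked up per lead. The function name `mergeLeadsWithSubs` and the sample data are assumptions for illustration.

```javascript
// Groups Sub rows by their lead id once, then emits each lead row
// immediately followed by its Sub rows.
function mergeLeadsWithSubs(leads, subs) {
  const subsByLead = new Map();
  for (const sub of subs) {
    const group = subsByLead.get(sub.lead_article_id) ?? [];
    group.push(sub);
    subsByLead.set(sub.lead_article_id, group);
  }

  const ordered = [];
  for (const lead of leads) {
    ordered.push(lead, ...(subsByLead.get(lead.id) ?? []));
  }
  return ordered;
}

const leads = [
  { id: 1, lead_article_id: null },
  { id: 3, lead_article_id: null },
];
const subs = [
  { id: 7, lead_article_id: 3 },
  { id: 4, lead_article_id: 1 },
  { id: 9, lead_article_id: 1 },
];
const orderedIds = mergeLeadsWithSubs(leads, subs).map((row) => row.id);
```

The resulting order is the same as with the nested loops: every lead row first, then its Sub rows in the order they were retrieved.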

View

Once we have our Livewire controller set up, let’s bring in some color to our Livewire-component view, resources/views/livewire/article-table.blade.php:

<table id="myTable">
    <thead>...</thead>
    {{-- wire:ignore keeps Livewire from reloading this tbody on every update done on our $dataRows --}}
    <tbody id="tbody" wire:ignore></tbody>
</table>

<nav role="navigation" aria-label="Pagination Navigation" class="flex justify-between">
    <button onclick="prevPage()">Prev</button>
    <button onclick="nextPage()">Next</button>
</nav>

What makes our setup pretty cool is that pagination will be done strictly client-side. This means user interaction is available only on data the UI has access to—meaning from the JavaScript side of things—and consequently, a lag-free pagination experience for our users!

Client-Side Pagination

To display our data, we initialize all variables we need to keep track of from the JavaScript side. This includes a reference to our table element, some default pagination details, and finally a myData variable to easily access the data we received from $dataRows.

Go ahead and add in a <script> section to our Livewire-component view in resources/views/livewire/article-table.blade.php:

<script>
// Reference to table element
var mTable = document.getElementById("myTable");

// Transfer $dataRows to a JavaScript variable for easy use.
// Blade's @json directive outputs the data as a JavaScript literal,
// so quotes inside the data cannot break the script.
var myData = @json($dataRows);

// Default page for our users
var page = 1;
var startRow = 0;

// Let's update our table element with data
refreshPage();

Then set up a quick JavaScript function that will display myData rows in our tbody based on the current page.

function refreshPage()
{
    // Let's clear some air
    document.getElementById("tbody").innerHTML = '';

    // Determine which index/row to start the page with
    startRow = calculatePageStartRow(page);

    // Add rows to the tbody
    for (let row = startRow; row < myData.length && row < startRow + 10; row++) {
        let item = myData[row];
        let rowTable = mTable.getElementsByTagName('tbody')[0].insertRow(-1);

        // Coloring scheme to differentiate Sub rows
        let className = "";
        if (item['lead_article_id'] != null) {
            rowTable.className = "pl-10 bg-gray-200";
            className = "pl-10";
        }

        let cell1 = rowTable.insertCell(0);
        let cell2 = rowTable.insertCell(1);
        let cell3 = rowTable.insertCell(2);
        let cell4 = rowTable.insertCell(3);

        cell1.innerHTML = '<div class="py-3 ' + className + ' px-6 flex items-center">' + item['url'] + '</div>';
        cell2.innerHTML = '<div class="py-3 ' + className + ' px-6 flex items-center">' + item['source'] + '</div>';
        cell3.innerHTML = '<div class="py-3 ' + className + ' px-6 flex items-center">' + item['id'] + '</div>';
        cell4.innerHTML = '<div class="py-3 ' + className + ' px-6 flex items-center">' + item['lead_article_id'] + '</div>';
    }
}
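Since the bookmark values come from external feeds and are concatenated straight into innerHTML, it may be worth escaping them first. The sketch below is one possible way to do that; `escapeHtml` and `cellHtml` are hypothetical helpers that are not part of the original component, and unlike the code above they render a missing value as an empty cell.

```javascript
// Hypothetical helper: escapes the characters that are special in HTML
// so a value can be concatenated into an innerHTML string as plain text.
function escapeHtml(value) {
  return String(value ?? '')
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&#39;');
}

// Builds the same cell markup used in refreshPage(), with the value escaped.
function cellHtml(value, className = '') {
  return '<div class="py-3 ' + className + ' px-6 flex items-center">' + escapeHtml(value) + '</div>';
}

const safeCell = cellHtml('<img src=x onerror=alert(1)>', 'pl-10');
```

Inside refreshPage(), each assignment could then become something like `cell1.innerHTML = cellHtml(item['url'], className);`.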

Along with functionality to allow movement from one page to the next and back:

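function nextPage()
{
    // Only move forward if the next page has at least one row to show
    if (calculatePageStartRow(page + 1) < myData.length) {
        page = page + 1;
        refreshPage();
    }
}

function prevPage()
{
    if (page > 1) {
        page = page - 1;
        refreshPage();
    }
}

// Index of the first row on the given page (pages hold 10 rows each)
function calculatePageStartRow(mPage)
{
    return (mPage * 10) - 10;
}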

Next, quietly add in the accumulation of the remaining data with the help of Livewire’s polling feature, which stops once we’ve reached the total number of rows:

@if( count($dataRows) < $totalRows )
    <div wire:poll.5s>
        Loading more data...
        {{ $this->nextPageData() }}
        {{ $this->dispatchBrowserEvent('data-updated', ['newData' => $dataRows]) }}
    </div>
@endif

And finally, in response to the dispatchBrowserEvent above, let’s create a JavaScript listener to refresh our myData list and re-render our table rows—just in case the current page still has available slots for rows to show.

window.addEventListener('data-updated', event => {
    myData = event.detail.newData;
    refreshPage();
});
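The listener above re-renders on every poll. If we only want to redraw when the current page actually has room for new rows, a small check like the one below could work. This is a sketch under the assumption that polling only ever appends rows to the end of the list; `pageHasFreeSlots` is a made-up helper name.

```javascript
// Returns true when rows appended after `previousLength` could appear on
// the page currently shown, i.e. the page was not yet full.
function pageHasFreeSlots(previousLength, page, pageSize = 10) {
  const pageEnd = page * pageSize; // index just past the page's last row
  return previousLength < pageEnd;
}

// Possible wiring inside the listener (browser only, shown as a comment):
// window.addEventListener('data-updated', event => {
//   const previousLength = myData.length;
//   myData = event.detail.newData;
//   if (pageHasFreeSlots(previousLength, page)) refreshPage();
// });

const fullFirstPage = pageHasFreeSlots(20, 1);
const halfFilledSecondPage = pageHasFreeSlots(15, 2);
```

With 20 rows already loaded, page 1 is full and gets no redraw; with 15 rows loaded, page 2 still has five free slots, so new rows would trigger one.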

And that’s it! We’re done, in fewer than 300 lines of logic!

We now have our easy-to-maintain, ordered listing of groupable data, paginated in a table. We boast a client-side-only pagination that promotes a lag-free experience for our users’ navigation interaction.


Retrospect

If there’s one treasure that should stay with us from our journey today, it’s this:

Learning comes from retrospect.

—Don’t be afraid to look up the past: it’s where we’ve all been through, it’s how we learn from our actions, and it’s what drives us forward to new, exciting roads—heading towards our next destination.

Take a deep breath. Let’s recap what we’ve been through today:

Problem:

Grouping rows and displaying them across pages in a table can get complicated thanks to the obligation of maintaining order of groupings across pages.

Solution:

Livewire provides its polling mechanism to call server functions periodically. It also gives out this marvelous integration of JavaScript listeners with PHP Livewire events.

To solve the complexity of grouping rows in a paginated table, we learned that we can initially have a short list of ordered data—hopefully with some allowance.

Afterwards we just needed to keep the table’s data bustling and updated with Livewire's polling mechanism to keep user-interaction strictly in the client side of things.

For the last puzzle piece of our setup, we used a JavaScript event listener, triggered by the browser event Livewire dispatches, to update the data in our client page’s table after every poll completed in the background.

With data accumulation happening in the background, we were able to implement our user-table interaction purely on client-side JavaScript, eliminating any server-induced next-page data-request lag.

The approach we’ve implemented is not perfect by any means. It has benefits and drawbacks:

Benefits:

- Data accumulation reduces complexity while maintaining the order of data with pagination.

- User interaction is sublimely performant because it only deals with local data.

Drawbacks:

- Because polling happens automagically in the background until we complete our entire dataset, we risk unnecessary queries to the server for the duration our user has the page actively opened.

- Livewire sends back all public attributes with every call. The closer our dataset gets to completion, the larger the data returned by the server.
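One possible way to soften the second drawback, sketched here purely as an assumption and not something the component above does, is to send the browser only the newly retrieved batch and let the client append it to what it already holds. That would also require the component to stop keeping the whole list in a public attribute. The `appendBatch` helper below is hypothetical.

```javascript
// Appends a batch of new rows to the accumulated list, skipping any row
// whose id is already present (for example if a batch is delivered twice).
function appendBatch(accumulated, batch) {
  const seen = new Set(accumulated.map((row) => row.id));
  for (const row of batch) {
    if (!seen.has(row.id)) {
      accumulated.push(row);
      seen.add(row.id);
    }
  }
  return accumulated;
}

const clientRows = [{ id: 1 }, { id: 2 }];
appendBatch(clientRows, [{ id: 2 }, { id: 3 }]);
const clientIds = clientRows.map((row) => row.id);
```

The trade-off is that the client becomes the only holder of the full list, so a page reload starts the accumulation over.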

Experiment!

Maybe polling until all the data was consumed was a bit overwhelming, and might have given some of us a spike in the good old blood pressure. It really depends on the circumstances of our application, and our personal health. But see, and I hope you see this: with Livewire, there are tons of ways our user-table interaction can evolve!

- Want to remove the polling part but keep the client-side pagination? Keep the data accumulation, but replace polling with extra allowance from our next page—show the next 10 rows, but append 20 rows to our accumulated list!

- Want to add search and sorting? You can opt to work on the currently accumulated data as usual. If that’s a bit too naive, how about a refresh of the list?

The fun part in the two experiments you can try out above is you can still keep the pagination client-side.
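For the search experiment, here is a rough sketch of filtering the accumulated data while keeping groups intact. It assumes each row has the `url`, `id`, and `lead_article_id` fields used in our table; the `searchGroups` name and the sample URLs are invented for illustration.

```javascript
// Keeps whole groups: a lead row and all of its Sub rows stay together
// whenever any row in the group matches the search term.
function searchGroups(rows, term) {
  const needle = term.toLowerCase();
  const matchingLeadIds = new Set();
  for (const row of rows) {
    if (String(row.url).toLowerCase().includes(needle)) {
      // A Sub row points at its lead; a lead row identifies its own group.
      matchingLeadIds.add(row.lead_article_id ?? row.id);
    }
  }
  return rows.filter((row) => matchingLeadIds.has(row.lead_article_id ?? row.id));
}

const sampleRows = [
  { id: 1, lead_article_id: null, url: 'https://a.test/laravel' },
  { id: 4, lead_article_id: 1, url: 'https://b.test/laravel-copy' },
  { id: 2, lead_article_id: null, url: 'https://a.test/php' },
  { id: 5, lead_article_id: 2, url: 'https://c.test/livewire' },
];
const livewireIds = searchGroups(sampleRows, 'Livewire').map((row) => row.id);
```

Since the filter never reorders rows, the result can be handed to the same client-side pagination as the full list.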

Let’s wrap up our journey here

I hope you learned a thing or two from this blog post. If you have any questions, concerns, or constructively kind criticism, be sure to let me know!

I’m always up for a conversation, and just a tweet away.