Fly.io runs apps close to users by taking containers and upgrading them to full-fledged virtual machines running on our own hardware around the world. We’re also building an open-source distributed file system for SQLite called LiteFS which is pretty cool too. Give us a whirl and get up and running quickly.

Full-stack developers are sleeping on SQLite, a database most devs think more suited to unit tests than production loads. That’s true enough for some apps. Most web apps are read-heavy, though, and we can use that to our advantage. With the right tooling, SQLite makes for faster, simpler web apps.

To understand why we won’t shut up about SQLite, think about latency. You have a budget of around 100ms to make an app feel snappy. Individual Postgres queries add milliseconds of latency. Apps often run multiple queries before responding to users. Database round trips can take a big bite out of a latency budget.

The same problem infects your full-stack code. Developing against a relational database requires devs to watch out for “N+1” query patterns, where a query leads to a loop that leads to more queries. N+1 queries against Postgres and MySQL can be lethal to performance. Not so much for SQLite.

The challenge of building full-stack on SQLite is that it isn’t client-server: it’s a library that runs inside of your app. In the past, that’s made it hard to get durability and replication. Most devs aren’t comfortable with a “single-server” architecture, where any downtime in your app server takes your whole app down.

But you don’t need to make peace with single-server designs to take advantage of SQLite. Earlier this year, we wrote about why we’re all in on Litestream. Litestream is SQLite’s missing disaster recovery system: it’s a sidecar process that hooks into SQLite’s journaling and copies database pages to object stores such as S3. Like SQLite itself, it has the virtue of being easy to get your head around; we explained most of the design in a single blog post, and using it just takes a couple commands.

We want to see how far we can push this model, and so we’ve been working on something new.

LiteFS: Where We’re Going We Don’t Need Database Servers

At least, not as such.

LiteFS extends the idea of Litestream with fine-grained transactional control. Where Litestream simply copies the raw SQLite WAL file, LiteFS can inspect and ship individual transactions, which span pages, and are the true unit of change in a SQL database.

SQLite imposes on us a constraint that makes this transactional control harder: SQLite is baked into the apps that use it. If you build something that changes the SQLite library itself, you’re not building tooling; you’re building a new database. And we’re not interested in getting people to switch to a new flavor of SQLite.

There’s two options for intercepting the file system API in SQLite:

- Use the Virtual File System (VFS) abstraction in SQLite.

- Build a FUSE file system.

The VFS option is easier so, naturally, we chose to build a FUSE file system. That’s how you’re supposed to do it, right?

LiteFS works by interposing a very thin virtual filesystem between your app and your on-disk database file. It’s not a file system like ext4, but rather a pass-through. Think of it as a file system proxy. What that proxy does is track SQLite databases to spot transactions and then LiteFS copies out those transactions to be shipped to replicas.

In the default journaling mode, transactions are easy to identify: a write transaction starts when the -journal file is created, and ends when it’s deleted. The journal stores the page numbers and old page data and we can look up the new page data from the main database file.

You see where this is going. SQLite’s exquisitely documented file format makes it easy for LiteFS to replicate whole databases. Now we’ve got transaction boundaries. So we roll those transactions up into a simple file format we call LTX. LiteFS replicas can replay those transactions back to recreate the current (or any previous) transaction state of a LiteFS-tracked SQLite database — without touching app code. It seems like magic, but it’s a natural consequence of SQLite’s strong design.

Ok, so why didn’t you write a VFS instead?

First off, we have nothing against the SQLite VFS system—it’s great! We’re planning on also releasing LiteFS as a VFS with a super catchy name like… LiteVFS.

If you’re unfamiliar with VFSes, they serve as an abstracted file system API. In fact, you use them all the time since SQLite ships with two built-in VFS modules: one for Unix & one for Windows. You can also load a third-party VFS as an extension, however, therein lies the first problem. There’s an extra step to use it. Every time someone needs to use the database, they have to remember to load the VFS. That includes when your application runs but also when you just load up the sqlite3 CLI.

LiteFS also needs to run an API server to replicate data between nodes. This gets complicated if you have multiple processes on a single machine trying to access the same local database. Which one runs the API server?

The FUSE file system solves many of these usability issues by being a single point that all database calls go through. Once you mount it, there’s no additional steps to remember and any number of processes can use it just like a regular file system.

What LiteFS Can Do Today

LiteFS’ roots are in Litestream which was built with a simple purpose: keep your data safe on S3. However, it still ran with a single-server architecture which poses two important limitations.

First, if your one server goes down during a deploy, your application stops. That sucks.

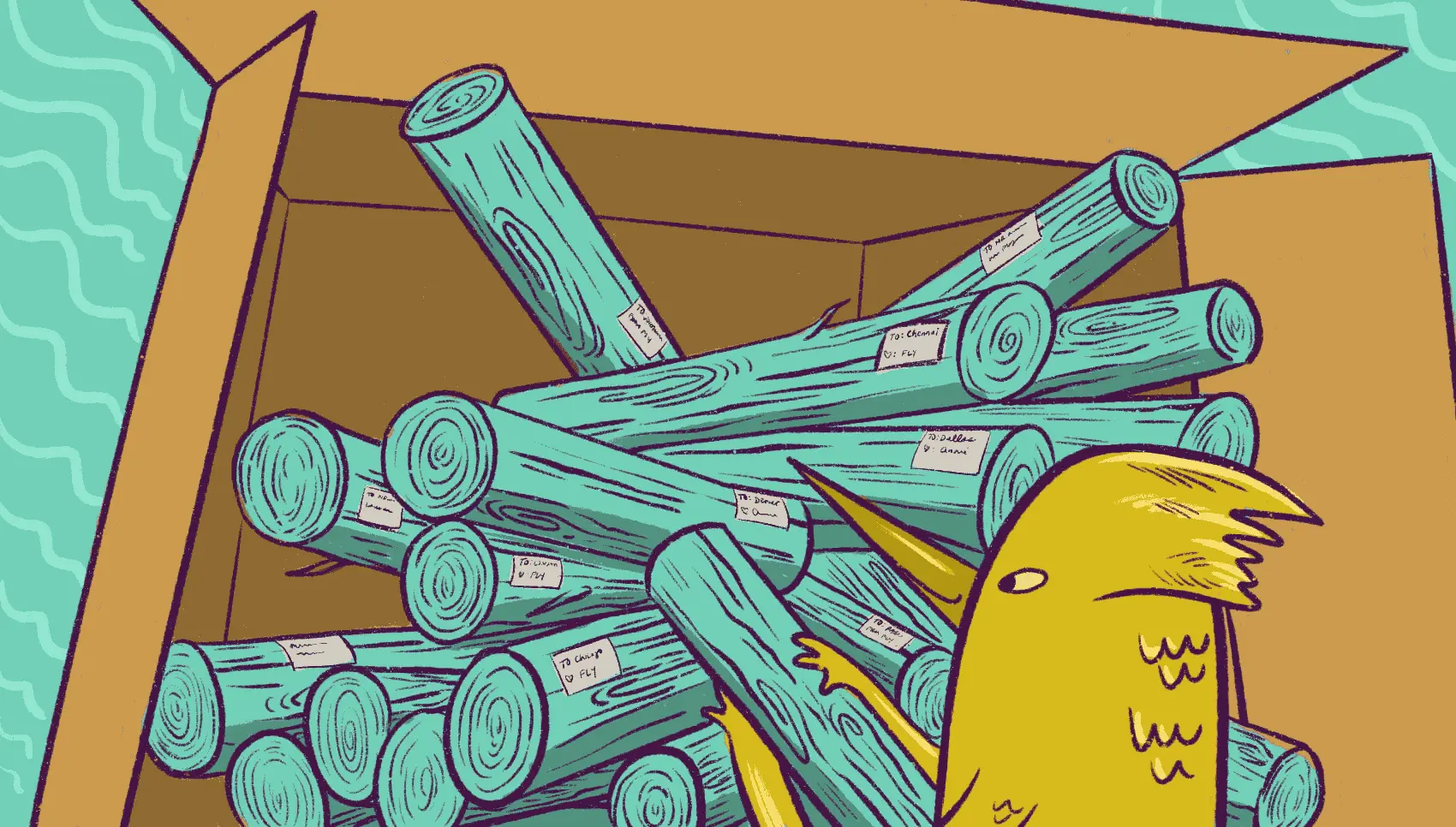

Second, your application can only serve requests from that one server. If you fired up your server in Dallas then that’ll be snappy for Texans. But your users in Chennai will be cursing your sluggish response times since there’s a 250ms ping time between Texas & India.

LiteFS aims to fix these limitations.

To improve availability, it uses leases to determine the primary node in your cluster. By default, it uses Hashicorp’s Consul.

With Consul, any node marked as a candidate can become the primary node by obtaining a time-based lease and is the sole node that can write to the database during that time. This fits well in SQLite’s single-writer paradigm. When you deploy your application and need to shut down the primary, that node can release its lease and the “primary” status will instantly move to another node.

To improve latency, we’re aiming at a scale-out model that works similarly to Fly Postgres. That’s to say: writes get forwarded to the primary and all read requests get served from their local copies. Most app requests are reads, and those reads can be served lightning fast from in-core SQLite replicas anywhere in your deployment.

But wait, that’s not all! There are many ways to do replication and each application has its own needs around data access. LiteFS also lets you use a static primary node if you don’t want to use Consul.

We even have more topologies in the works. We’ve had suggestions from the community to support other approaches like primary-initiated replication. That would allow folks to stream real-time database updates to customers outside their network instead of customers connecting in. Kinda niche, but cool.

Split Brain Detection

LiteFS uses asynchronous replication between a loose membership of ephemeral nodes. It trades some durability guarantees for performance and operational simplicity that can make sense for many applications.

It’s able to do this because the primary election through Consul is dynamic and self-healing, which is again both the good and the bad news. Because dynamic topologies can have weird failure modes, LiteFS is designed defensively: we maintain a checksum for the entire state of the database and include it in each LTX file. This sounds expensive, but we can maintain it incrementally.

We’re able to maintain this checksum by calculating the checksum for each page and XOR'ing the results together:

chksum = 0

FOREACH page

chksum = chksum XOR crc64(page.number, page.data)

END

When a transaction changes pages in the database, we’ll start with the checksum of the previous LTX file, remove the old checksums for the changed pages, and add in the new checksums for the changed pages:

chksum = prev_chksum

FOREACH page

chksum = chksum XOR crc64(page.number, page.old_data)

chksum = chksum XOR crc64(page.number, page.new_data)

END

Since XOR operations are commutative, we can even checksum across compacted LTX files or checksum the current state of the database. We can do this in LiteFS because we have fine-grained control over the file system writes.

These database checksums ensure that an LTX file cannot be applied out of order and corrupt your database: they ensure byte-for-byte consistency for all the underlying data. We verify these on startup so that every database must be in a consistent state relative to its LTX checksum.

Where We’re Heading With This

We think LiteFS has a good shot at offering the best of both n-tier database designs like Postgres and in-core databases like SQLite. In a LiteFS deployment, the parts of your database that really want to be networked are networked, but heavy lifting of the data itself isn’t.

It’s not just about performance. If you’re using a database server like Postgres or MySQL today, chances are you’re using a “managed” database service, where some other team is making sure your database is up and running. Everybody uses managed services because keeping database servers happy is annoying. With SQLite, there’s not as much stuff that can break.

And we’ll keep saying this: the reason we think LiteFS and full-stack SQLite is a good bet is that the design is simple. You can read a summary of the LiteFS design and understand what each of these components is doing. SQLite is one of of the most trusted libraries in the world; most of our job is just letting SQLite be SQLite. Your app doesn’t even need to know LiteFS is there.

We’re plowing ahead on LiteFS features. Here are a few big ones to look out for:

WAL-mode Support: today, LiteFS works with SQLite’s default rollback journal mode. But WAL mode is where it’s at with modern SQLite. The FUSE proxy model works fine here too: transactions start with a write to the -wal file, and end with another write that marks a header with the commit field set.

Write Forwarding: SQLite works with a single-writer, multiple-reader model and our primary/replica replication mimics that. However, it adds friction to require developers to forward writes to the primary node. Instead, we’re making it so any node can perform a write and then forward that transaction data to the primary. The primary can then replicate it out to the rest of the cluster.

S3 Replication: running a cluster of LiteFS nodes significantly improves your durability over a single-server deployment. However, nothing gives quite the same warm fuzzy feeling as tucking away a copy of your database in object storage. This will work similarly to Litestream, however, LiteFS’ LTX files are built to be efficiently compacted so restoring a point-in-time copy of your database will be nearly instant.

Encryption: we want developers to feel safe keeping SQLite replicas on services like S3. So we’ve designed an AEAD encryption scheme that fits into LiteFS naturally and ensures that even if you manage to expose your LTX files to the Internet, you won’t have exposed any plaintext.

Try It Out

After several months of work, we’re comfortable calling LiteFS beta-ready. We’d be happy if you played around with it.

We’ve set up a documentation site for LiteFS so you can get going with it and understand how it works. The easiest way to get up and running is to walk through our Getting Started with LiteFS guide. It only takes about 10 minutes and you’ll have a globally-distributed SQLite application running. Crazy, right!?

LiteFS is completely open source, developed in the open, and in no way locked into Fly.io, which invests resources in this solely because we are nerds about SQLite and not in any way because LiteFS is part of a secret plan to take over the world. Pinky-swear!