Imagine if you could auto scale simply by wrapping any existing app code in a function and have that block of code run in a temporary copy of your app.

The pursuit of elastic, auto-scaling applications has taken us to silly places.

Serverless/FaaS had a couple things going for it. Elastic Scale™ is hard. It’s even harder when you need to manage those pesky servers. It also promised pay-what-you-use costs to avoid idle usage. Good stuff, right?

Well the charade is over. You offload scaling concerns and the complexities of scaling, just to end up needing more complexity. Additional queues, storage, and glue code to communicate back to our app is just the starting point. Dev, test, and CI complexity balloons as fast as your costs. Oh, and you often have to rewrite your app in proprietary JavaScript – even if it’s already written in JavaScript!

At the same time, the rest of us have elastically scaled by starting more webservers. Or we’ve dumped on complexity with microservices. This doesn’t make sense. Piling on more webservers to transcode more videos or serve up more ML tasks isn’t what we want. And granular scale shouldn’t require slicing our apps into bespoke operational units with their own APIs and deployments to manage.

Enough is enough. There’s a better way to elastically scale applications.

The FLAME pattern

Here’s what we really want:

- We don’t want to manage those pesky servers. We already have this for our app deployments via

fly deploy,git push heroku,kubectl, etc - We want on-demand, granular elastic scale of specific parts of our app code

- We don’t want to rewrite our application or write parts of it in proprietary runtimes

Imagine if we could auto scale simply by wrapping any existing app code in a function and have that block of code run in a temporary copy of the app.

Enter the FLAME pattern.

FLAME - Fleeting Lambda Application for Modular Execution

With FLAME, you treat your entire application as a lambda, where modular parts can be executed on short-lived infrastructure.

No rewrites. No bespoke runtimes. No outrageous layers of complexity. Need to insert the results of an expensive operation to the database? PubSub broadcast the result of some expensive work? No problem! It’s your whole app so of course you can do it.

The Elixir flame library implements the FLAME pattern. It has a backend adapter for Fly.io, but you can use it on any cloud that gives you an API to spin up an instance with your app code running on it. We’ll talk more about backends in a bit, as well as implementing FLAME in other languages.

First, lets watch a realtime thumbnail generation example to see FLAME + Elixir in action:

Now let’s walk through something a little more basic. Imagine we have a function to transcode video to thumbnails in our Elixir application after they are uploaded:

def generate_thumbnails(%Video{} = vid, interval) do

tmp = Path.join(System.tmp_dir!(), Ecto.UUID.generate())

File.mkdir!(tmp)

args = ["-i", vid.url, "-vf", "fps=1/#{interval}", "#{tmp}/%02d.png"]

System.cmd("ffmpeg", args)

urls = VidStore.put_thumbnails(vid, Path.wildcard(tmp <> "/*.png"))

Repo.insert_all(Thumb, Enum.map(urls, &%{vid_id: vid.id, url: &1}))

end

Our generate_thumbnails function accepts a video struct. We shell out to ffmpeg to take the video URL and generate thumbnails at a given interval. We then write the temporary thumbnail paths to durable storage. Finally, we insert the generated thumbnail URLs into the database.

This works great locally, but CPU bound work like video transcoding can quickly bring our entire service to a halt in production. Instead of rewriting large swaths of our app to move this into microservices or some FaaS, we can simply wrap it in a FLAME call:

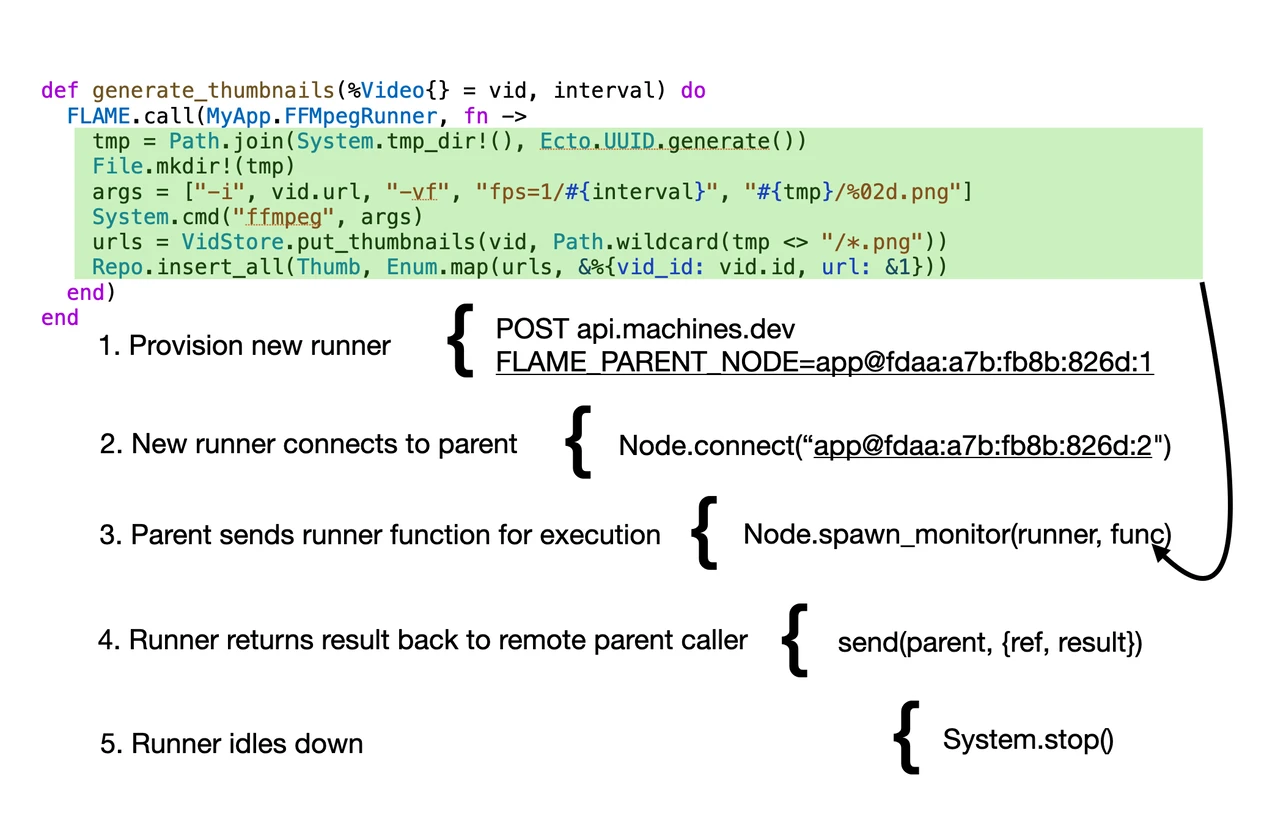

def generate_thumbnails(%Video{} = vid, interval) do

FLAME.call(MyApp.FFMpegRunner, fn ->

tmp = Path.join(System.tmp_dir!(), Ecto.UUID.generate())

File.mkdir!(tmp)

args =

["-i", vid.url, "-vf", "fps=1/#{interval}", "#{tmp}/%02d.png"]

System.cmd("ffmpeg", args)

urls = VidStore.put_thumbnails(vid, Path.wildcard(tmp <> "/*.png"))

Repo.insert_all(Thumb, Enum.map(urls, &%{vid_id: vid.id, url: &1}))

end)

end

That’s it! FLAME.call accepts the name of a runner pool, and a function. It then finds or boots a new copy of our entire application and runs the function there. Any variables the function closes over (like our %Video{} struct and interval) are passed along automatically.

When the FLAME runner boots up, it connects back to the parent node, receives the function to run, executes it, and returns the result to the caller. Based on configuration, the booted runner either waits happily for more work before idling down, or extinguishes itself immediately.

Let’s visualize the flow:

We changed no other code and issued our DB write with Repo.insert_all just like before, because we are running our entire application. Database connection(s) and all. Except this fleeting application only runs that little function after startup and nothing else.

In practice, a FLAME implementation will support a pool of runners for hot startup, scale-to-zero, and elastic growth. More on that later.

Solving a problem vs removing the problem

FaaS solutions help you solve a problem. FLAME removes the problem.

The FaaS labyrinth of complexity defies reason. And it’s unavoidable. Let’s walkthrough the thumbnail use-case to see how.

We try to start with the simplest building block like request/response AWS Lambda Function URL’s.

The complexity hits immediately.

We start writing custom encoders/decoders on both sides to handle streaming the thumbnails back to the app over HTTP. Phew that’s done. Wait, is our video transcoding or user uploads going to take longer than 15 minutes? Sorry, hard timeout limit – time to split our videos into chunks to stay within the timeout, which means more lambdas to do that. Now we’re orchestrating lambda workflows and relying on additional services, such as SQS and S3, to enable this.

All the FaaS is doing is adding layers of communication between your code and the parts you want to run elastically. Each layer has its own glue integration price to pay.

Ultimately handling this kind of use-case looks something like this:

- Trigger the lambda via HTTP endpoint, S3, or API gateway ($)

- Write the bespoke lambda to transcode the video ($)

- Place the thumbnail results into SQS ($)

- Write the SQS consumer in our app (dev $)

- Persist to DB and figure out how to get events back to active subscribers that may well be connected to other instances than the SQS consumer (dev $)

This is nuts. We pay the FaaS toll at every step. We shouldn’t have to do any of this!

FaaS provides a bunch of offerings to build a solution on top of. FLAME removes the problem entirely.

FLAME Backends

On Fly.io infrastructure the FLAME.FlyBackend can boot a copy of your application on a new Machine and have it connect back to the parent for work within ~3s.

By default, FLAME ships with a LocalBackend and FlyBackend, but any host that provides an API to provision a server and run your app code can work as a FLAME backend. Erlang and Elixir primitives are doing all the heavy lifting here. The entire FLAME.FlyBackend is < 200 LOC with docs. The library has a single dependency, req, which is an HTTP client.

Because Fly.io runs our applications as a packaged up docker image, we simply ask the Fly API to boot a new Machine for us with the same image that our app is currently running. Also thanks to Fly infrastructure, we can guarantee the FLAME runners are started in the same region as the parent. This optimizes latency and lets you ship whatever data back and forth between parent and runner without having to think about it.

Look at everything we’re not doing

With FaaS, just imagine how quickly the dev and testing story becomes a fate worse than death.

To run the app locally, we either need to add some huge dev dependencies to simulate the entire FaaS pipeline, or worse, connect up our dev and test environments directly to the FaaS provider.

With FLAME, your dev and test runners simply run on the local backend.

Remember, this is your app. FLAME just controls where modular parts of it run. In dev or test, those parts simply run on the existing runtime on your laptop or CI server.

Using Elixir, we can even send a file across to the remote FLAME application thanks to the distributed features of the Erlang VM:

def generate_thumbnails(%Video{} = vid, interval) do

parent_stream = File.stream!(vid.filepath, [], 2048)

FLAME.call(MyApp.FFMpegRunner, fn ->

tmp_file = Path.join(System.tmp_dir!(), Ecto.UUID.generate())

flame_stream = File.stream!(tmp_file)

Enum.into(parent_stream, flame_stream)

tmp = Path.join(System.tmp_dir!(), Ecto.UUID.generate())

File.mkdir!(tmp)

args =

["-i", tmp_file, "-vf", "fps=1/#{interval}", "#{tmp}/%02d.png"]

System.cmd("ffmpeg", args)

urls = VidStore.put_thumbnails(vid, Path.wildcard(tmp <> "/*.png"))

Repo.insert_all(Thumb, Enum.map(urls, &%{vid_id: vid.id, url: &1}))

end)

end

On line 2 we open a file on the parent node to the video path. Then in the FLAME child, we stream the file from the parent node to the FLAME server in only a couple lines of code. That’s it! No setup of S3 or HTTP interfaces required.

With FLAME it’s easy to miss everything we’re not doing:

- We don’t need to write code outside of our application. We can reuse business logic, database setup, PubSub, and all the features of our respective platforms

- We don’t need to manage deploys of separate services or endpoints

- We don’t need to write results to S3 or SQS just to pick up values back in our app

- We skip the dev, test, and CI dependency dance

FLAME outside Elixir

Elixir is fantastically well suited for the FLAME model because we get so much for free like process supervision and distributed messaging. That said, any language with reasonable concurrency primitives can take advantage of this pattern. For example, my teammate, Lubien, created a proof of concept example for breaking out functions in your JavaScript application and running them inside a new Fly Machine: https://github.com/lubien/fly-run-this-function-on-another-machine

So the general flow for a JavaScript-based FLAME call would be to move the modular executions to a new file, which is executed on a runner pool. Provided the arguments are JSON serializable, the general FLAME flow is similar to what we’ve outlined here. Your application, your code, running on fleeting instances.

A complete FLAME library will need to handle the following concerns:

- Elastic pool scale-up and scale-down logic

- Hot vs cold startup with pools

- Remote runner monitoring to avoid orphaned resources

- How to monitor and keep deployments fresh

For the rest of this post we’ll see how the Elixir FLAME library handles these concerns as well as features uniquely suited to Elixir applications. But first, you might be wondering about your background job queues.

What about my background job processor?

FLAME works great inside your background job processor, but you may have noticed some overlap. If your job library handles scaling the worker pool, what is FLAME doing for you? There’s a couple important distinctions here.

First, we reach for these queues when we need durability guarantees. We often can turn knobs to have the queues scale to handle more jobs as load changes. But durable operations are separate from elastic execution. Conflating these concerns can send you down a similar path to lambda complexity. Leaning on your worker queue purely for offloaded execution means writing all the glue code to get the data into and out of the job, and back to the caller or end-user’s device somehow.

For example, if we want to guarantee we successfully generated thumbnails for a video after the user upload, then a job queue makes sense as the dispatch, commit, and retry mechanism for this operation. The actual transcoding could be a FLAME call inside the job itself, so we decouple the ideas of durability and scaled execution.

On the other side, we have operations we don’t need durability for. Take the screencast above where the user hasn’t yet saved their video. Or an ML model execution where there’s no need to waste resources churning a prompt if the user has already left the app. In those cases, it doesn’t make sense to write to a durable store to pick up a job for work that will go right into the ether.

Pooling for Elastic Scale

With the Elixir implementation of FLAME, you define elastic pools of runners. This allows scale-to-zero behavior while also elastically scaling up FLAME servers with max concurrency limits.

For example, lets take a look at the start/2 callback, which is the entry point of all Elixir applications. We can drop in a FLAME.Pool for video transcriptions and say we want it to scale to zero, boot a max of 10, and support 5 concurrent ffmpeg operations per runner:

def start(_type, _args) do

flame_parent = FLAME.Parent.get()

children = [

...,

MyApp.Repo,

{FLAME.Pool,

name: Thumbs.FFMpegRunner,

min: 0,

max: 10,

max_concurrency: 5,

idle_shutdown_after: 30_000},

!flame_parent && MyAppWeb.Endpoint

]

|> Enum.filter(& &1)

opts = [strategy: :one_for_one, name: MyApp.Supervisor]

Supervisor.start_link(children, opts)

end

We use the presence of a FLAME parent to conditionally start our Phoenix webserver when booting the app. There’s no reason to start a webserver if we aren’t serving web traffic. Note we leave other services like the database MyApp.Repo alone because we want to make use of those services inside FLAME runners.

Elixir’s supervised process approach to applications is uniquely great for turning these kinds of knobs.

We also set our pool to idle down after 30 seconds of no caller operations. This keeps our runners hot for a short while before discarding them. We could also pass a min: 1 to always ensure at least one ffmpeg runner is hot and ready for work by the time our application is started.

Process Placement

In Elixir, stateful bits of our applications are built around the process primitive – lightweight greenthreads with message mailboxes. Wrapping our otherwise stateless app code in a synchronous FLAME.call‘s or async FLAME.cast’s works great, but what about the stateful parts of our app?

FLAME.place_child exists to take an existing process specification in your Elixir app and start it on a FLAME runner instead of locally. You can use it anywhere you’d use Task.Supervisor.start_child , DynamicSupervisor.start_child, or similar interfaces. Just like FLAME.call, the process is run on an elastic pool and runners handle idle down when the process completes its work.

And like FLAME.call, it lets us take existing app code, change a single LOC, and continue shipping features.

Let’s walk through the example from the screencast above. Imagine we want to generate video thumbnails for a video as it is being uploaded. Elixir and LiveView make this easy. We won’t cover all the code here, but you can view the full app implementation.

Our first pass would be to write a LiveView upload writer that calls into a ThumbnailGenerator:

defmodule ThumbsWeb.ThumbnailUploadWriter do

@behaviour Phoenix.LiveView.UploadWriter

alias Thumbs.ThumbnailGenerator

def init(opts) do

generator = ThumbnailGenerator.open(opts)

{:ok, %{gen: generator}}

end

def write_chunk(data, state) do

ThumbnailGenerator.stream_chunk!(state.gen, data)

{:ok, state}

end

def meta(state), do: %{gen: state.gen}

def close(state, _reason) do

ThumbnailGenerator.close(state.gen)

{:ok, state}

end

end

An upload writer is a behavior that simply ferries the uploaded chunks from the client into whatever we’d like to do with them. Here we have a ThumbnailGenerator.open/1 which starts a process that communicates with an ffmpeg shell. Inside ThumbnailGenerator.open/1, we use regular elixir process primitives:

# thumbnail_generator.ex

def open(opts \\ []) do

Keyword.validate!(opts, [:timeout, :caller, :fps])

timeout = Keyword.get(opts, :timeout, 5_000)

caller = Keyword.get(opts, :caller, self())

ref = make_ref()

parent = self()

spec = {__MODULE__, {caller, ref, parent, opts}}

{:ok, pid} = DynamicSupervisor.start_child(@sup, spec)

receive do

{^ref, %ThumbnailGenerator{} = gen} ->

%ThumbnailGenerator{gen | pid: pid}

after

timeout -> exit(:timeout)

end

end

The details aren’t super important here, except line 10 where we call {:ok, pid} = DynamicSupervisor.start_child(@sup, spec), which starts a supervisedThumbnailGenerator process. The rest of the implementation simply ferries chunks as stdin into ffmpeg and parses png’s from stdout. Once a PNG delimiter is found in stdout, we send the caller process (our LiveView process) a message saying “hey, here’s an image”:

# thumbnail_generator.ex

@png_begin <<137, 80, 78, 71, 13, 10, 26, 10>>

defp handle_stdout(state, ref, bin) do

%ThumbnailGenerator{ref: ^ref, caller: caller} = state.gen

case bin do

<<@png_begin, _rest::binary>> ->

if state.current do

send(caller, {ref, :image, state.count, encode(state)})

end

%{state | count: state.count + 1, current: [bin]}

_ ->

%{state | current: [bin | state.current]}

end

end

The caller LiveView process then picks up the message in a handle_info callback and updates the UI:

# thumb_live.ex

def handle_info({_ref, :image, _count, encoded}, socket) do

%{count: count} = socket.assigns

{:noreply,

socket

|> assign(count: count + 1, message: "Generating (#{count + 1})")

|> stream_insert(:thumbs, %{id: count, encoded: encoded})}

end

The send(caller, {ref, :image, state.count, encode(state)} is one magic part about Elixir. Everything is a process, and we can message those processes, regardless of their location in the cluster.

It’s like if every instantiation of an object in your favorite OO lang included a cluster-global unique identifier to work with methods on that object. The LiveView (a process) simply receives the image message and updates the UI with new images.

Now let’s head back over to our ThumbnailGenerator.open/1 function and make this elastically scalable.

- {:ok, pid} = DynamicSupervisor.start_child(@sup, spec)

+ {:ok, pid} = FLAME.place_child(Thumbs.FFMpegRunner, spec)

That’s it! Because everything is a process and processes can live anywhere, it doesn’t matter what server our ThumbnailGenerator process lives on. It simply messages the caller with send(caller, …) and the messages are sent across the cluster if needed.

Once the process exits, either from an explicit close, after the upload is done, or from the end-user closing their browser tab, the FLAME server will note the exit and idle down if no other work is being done.

Check out the full implementation if you’re interested.

Remote Monitoring

All this transient infrastructure needs failsafe mechanisms to avoid orphaning resources. If a parent spins up a runner, that runner must take care of idling itself down when no work is present and handle failsafe shutdowns if it can no longer contact the parent node.

Likewise, we need to shutdown runners when parents are rolled for new deploys as we must guarantee we’re running the same code across the cluster.

We also have active callers in many cases that are awaiting the result of work on runners that could go down for any reason.

There’s a lot to monitor here.

There’s also a number of failure modes that make this sound like a harrowing experience to implement. Fortunately Elixir has all the primitives to make this an easy task thanks to the Erlang VM. Namely, we get the following for free:

- Process monitoring and supervision – we know when things go bad. Whether on a node-local process, or one across the cluster

- Node monitoring – we know when nodes come up, and when nodes go away

- Declarative and controlled app startup and shutdown - we carefully control the startup and shutdown sequence of applications as a matter of course. This allows us to gracefully shutdown active runners when a fresh deploy is triggered, while giving them time to finish their work

We’ll cover the internal implementation details in a future deep-dive post. For now, feel free to poke around the flame source.

What’s Next

We’re just getting started with the Elixir FLAME library, but it’s ready to try out now. In the future look for more advance pool growth techniques, and deep dives into how the Elixir implementation works. You can also find me @chris_mccord to chat about implementing the FLAME pattern in your language of choice.

Happy coding!

–Chris